THIS is why we can’t have nice things…

So this is where our future RAM went? Fuck this planet then 🤣

Jesus fucking Christ, 288GB. And this is why I can’t have 16?

And you have to buy a whole rack with 72 of them.

And none of us will be allowed to have them

Only datacenters and Fortune 500 companies will be able to use anything Nvidia

I mean if you have the 3 million to spend on a rack of them, I am sure they would allow you to have them.

I do wonder what happens to the old racks a few years down the road, when everyone is replacing their GPUs with the latest and greatest variants. Do they get sold for pennies on the dollar because everyone else doing AI wants cutting edge?

But can it run Crysis?

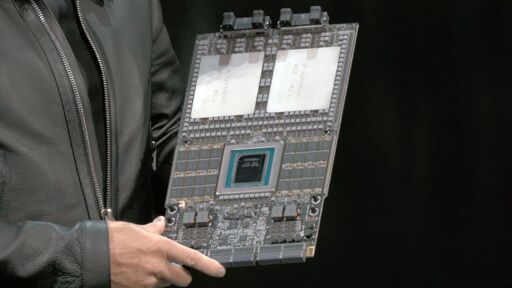

Nvidia’s Vera Rubin platform is the company’s next-generation architecture for AI data centers that includes an 88-core Vera CPU, Rubin GPU with 288 GB HBM4 memory, Rubin CPX GPU with 128 GB of GDDR7, NVLink 6.0 switch ASIC for scale-up rack-scale connectivity, BlueField-4 DPU with integrated SSD to store key-value cache, Spectrum-6 Photonics Ethernet, and Quantum-CX9 1.6 Tb/s Photonics InfiniBand NICs, as well as Spectrum-X Photonics Ethernet and Quantum-CX9 Photonics InfiniBand switching silicon for scale-out connectivity.

The buzzwords make my head hurt. Sounds like a copypasta

288 GB HBM4 memory

jfc…

Looking at the specs… fucking hell these things probably cost over 100k.

I wonder if we’ll see a generational performance leap with LLMs scaling to this much memory.

Current models are speculated to have 700 billion parameters or more. At 32-bit precision (single float, 4 bytes per parameter), that’s 2.8TB of RAM per model, or about 10 of these units. There are ways to lower it, but if you’re trying to run full precision (say for training) you’d use over 2x this, something like maybe 4x depending on how you store gradients and updates. By full precision I mean 32-bit, I’d reckon. It’s possible they actually train at 32-bit, I suppose, but I’d be kind of surprised.
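If anyone wants to check the napkin math, here's a rough sketch. Everything in it is an assumption for illustration: the function names are made up, the 700 billion figure is the speculated one from above, GB and TB are decimal, and the `overhead` multiplier is just a stand-in for the "2x to 4x" training guess. It ignores activations, KV cache, and whatever else eats memory in practice.

```python
import math

def weights_tb(params, bytes_per_param):
    """Memory for the raw weights alone, in decimal terabytes."""
    return params * bytes_per_param / 1e12

def units_needed(params, bytes_per_param, unit_gb=288, overhead=1.0):
    """How many 288 GB GPUs it takes to hold the weights.

    overhead is a crude multiplier for gradients and optimizer state
    (an assumption, not a measured number).
    """
    total_gb = params * bytes_per_param * overhead / 1e9
    return math.ceil(total_gb / unit_gb)

PARAMS = 700e9  # speculated parameter count, not an official figure

print(weights_tb(PARAMS, 4))                 # 32-bit weights, in TB
print(units_needed(PARAMS, 4))               # GPUs for 32-bit weights
print(units_needed(PARAMS, 2))               # GPUs for 16-bit weights
print(units_needed(PARAMS, 4, overhead=4))   # rough training guess at 4x
```

Swap `PARAMS` for 1.5e12 if you believe the bigger rumours.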

Edit: Also, they don’t release parameter counts anymore, but some folks think newer models are around 1.5 trillion parameters. So figure around 2-3x that number above for newer models. The only real strategy for these guys is bigger. I think it’s dumb, and the returns are diminishing rapidly, but you’ve got to sell the investors. If reciting nearly whole works verbatim is easy now, it’s going to be exact if they keep going. They’ll approach parameter counts big enough to just straight up store things in the weights.

Yeah they’re going to cost as much as a house.

I think we’ll see much larger active portions of larger MoEs, and larger context windows, which would be useful.

The non-LLM models I run would benefit a lot from this, but I don’t know if I’ll ever be able to justify what they’ll cost.

Question is, how long before it makes it to the next DGX Spark? Some people don’t have $10B to burn.