Truer words have never been said.

AI has a vibrant open source scene and is definitely not owned by a few people.

A lot of the data used to train it is owned by only a few people, though. It is record companies and publishing houses winning their lawsuits that will lead to dystopia. It’s a shame to see so many people actually cheering them on.

So long as there are big players releasing open weights models, which is true for the foreseeable future, I don’t think this is a big problem. Once those weights are released, they’re free forever, and anyone can fine-tune based on them, or use them to bootstrap new models by distillation or synthetic RL data generation.

For some reason the megacorps have got LLMs on the brain, and they’re the worst “AI” I’ve seen. There are other types of AI that are actually impressive, but the “writes a thing that looks like it might be the answer” machine is way less useful than they think it is.

Most LLMs for chat, pictures and clips are magical and amazing for about 4 to 8 hours of fiddling, then they lose all entertainment value.

As for practical use, the things can’t do math, so they’re useless at work. I write better emails on my own, so I can’t imagine being so lazy and socially inept that I need help writing an email asking for tech support or outlining an audit report. Sometimes the web summaries save me from clicking a result, but I usually click anyway because the things are so prone to very convincing hallucinations. So yeah, utterly useless in their current state.

I usually get some angsty reply when I say this from some techbro-AI-cultist-singularity-head who starts whinging about how it’s reshaped their entire life, but in some deep niche way that is completely irrelevant to the average working adult.

I have also talked to way too many delusional maniacs who are literally planning for the day an Artificial Super Intelligence is created and the whole world becomes like Star Trek and they personally will become wealthy and have all their needs met. They think this is going to happen within the next 5 years.

I’d say the biggest problem with AI is that it’s being treated as a tool to displace workers, but there is no system in place to make sure that the “value” (I’m not convinced commercial AI has created anything valuable) created by AI is redistributed to the workers it has displaced.

The system in place is “open weights” models. These AI companies don’t have a huge head start on the publicly available software, and if the value is there for a corporation, almost any savvy solo engineer can slap together something similar.

The biggest problem with AI is the damage it’s doing to human culture.

All while not solving any of its stated goals.

It’s a diversion. Its purpose is to divert resources and attention from any real progress in computing.

The AI business is owned by a tiny group of technobros who have no concern for what they have to do to get the results they want (“fuck the copyright, especially fuck the natural resources”), who want to be personally seen as the saviours of humanity (despite not being the ones who invented and implemented the actual tech), and who, like all bigwig biz boys, want all the money.

I don’t have a problem with AI tech in principle, but I hate the current business direction and what the AI business encourages people to do and use the tech for.

The biggest problem with AI is that it’s the brute-force solution to complex problems.

Instead of trying to figure out the most power-efficient algorithm for artificial analysis, they just threw more data and power at it.

Besides how often it’s wrong, by definition it won’t ever be as accurate or as efficient as actual thinking.

It’s the solution you come up with the day before the project is due, because you know it will technically pass and you’ll get a C.

Like Sam Altman, who invests in Prospera, a private “Start-up City” in Honduras where the board of directors picks and chooses which laws apply to them!

The switch to Techno-Feudalism is progressing far too quickly for my liking.

And those people want to use AI to extract money and lay people off in order to make even more money.

That’s “guns don’t kill people” logic.

Yeah, the AI absolutely is a problem, for those reasons along with it being wrong a lot of the time and its ridiculous energy consumption.

The real issues are capitalism and the lack of green energy.

If the arts were well funded, if people were given healthcare and UBI, if we had, at the very least, switched to nuclear like we should have decades ago, we wouldn’t be here.

The issue isn’t a piece of software.

like most of money


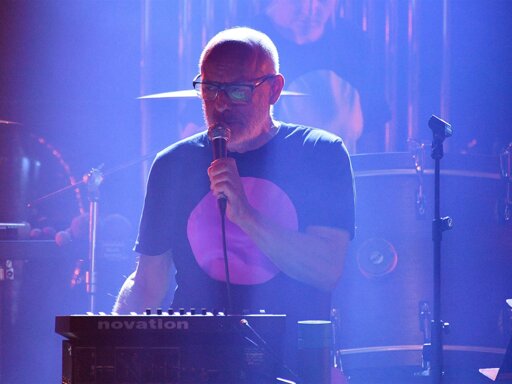

Brian Eno is cooler than most of you can ever hope to be.

Dunno. I’ve tried the generative music part (not the LLM kind), and I think if I spent a few more years of weekly migraines on it, I’d get better.

You mean in the same way that learning an instrument takes time and dedication?

I don’t really agree that this is the biggest issue. For me, the biggest issue is power consumption.

That is a big issue, but excessive power consumption isn’t intrinsic to AI. You can run a reasonably good AI on your home computer.

The AI companies don’t seem concerned about the diminishing returns, though, and will happily spend 1000% more power to gain that last 10% of intelligence. In a competitive market, why wouldn’t they, when power is so cheap?

AI will become one of the most important inventions in human history. Apply it to healthcare, science, and finance, and the world will become a better place, especially in healthcare. Hey artists and writers, you cannot stop intellectual evolution. AI is here to stay. All we need is a proven way to differentiate real art from AI art: an invisible watermark that can be scanned to reveal its true “raison d’être”. Sorry for going off-topic, but I agree that AI should be more open to verification of its use of copyrighted material. Don’t expect compensation, though.

Technological development and the future of our civilization are in the hands of a handful of idiots.